Research Topics

We are conducting a wide range of topics centered on spoken language processing and dialogue, emphasizing both theoretical and practical aspects. Below are the general research areas:

- Speech recognition and synthesis

- Spoken dialogue systems

- Natural language processing

- CG agent interaction

- Avatar communication support

In recent years, the emergence of neural networks, particularly large language models (LLMs), has significantly transformed these research fields. Below, we introduce the research content and examples of specific research themes for each area.

Speech Recognition and Synthesis

Speech processing technologies that transcribe speech audio data into text and assign time labels, such as speaker and context, form the foundation of speech media processing. The process involves various information processing technologies, from signal processing to language processing and speech understanding. Lee Lab has been conducting research on speech recognition for years. Our open-source speech recognition engine Julius, developed by PI Lee in 1996, has a history spanning nearly three decades and has been widely used both domestically and internationally.

Recently, we have also expanded our research into speech synthesis for unified speech processing. We are advancing research that integrates speech recognition and synthesis, incorporating cutting-edge techniques such as LLM-based speech synthesis.

- Speech recognition based on data generation via speech synthesis

- Speaker diarization (who spoke when) and its applications

- Expressive speech synthesis (speech-laugh, etc.)

- LLM-based speech synthesis

Recent publications

- Sei Ueno, Akinobu Lee "Beam search considering continuity in LLM-based speech synthesis" ASJ 155th Meeting (Spring 2026), 1-5-3

- Yuuki Yamakawa, Sei Ueno, Akinobu Lee "Rakugo speech synthesis focusing on character portrayal using large-scale models" ASJ 155th Meeting (Spring 2026), 1-Q-31

- Keigo Ichikawa, Sei Ueno, Akinobu Lee "Data generation for speaker diarization based on speaker transition probability" ASJ 153rd Meeting (Spring 2025), 3-P-2

Spoken Dialogue Systems

A simple voice command system can be made by composing speech recognition and synthesis technologies. However, a human-like dialogue system should include much more problem-solving modules to achieve a natural conversation, such as context understanding, recognizing user characteristics, finding conversation goals, devising strategies, detecting errors or mis-understanding, etc.

While traditional research has focused on individual modules, recent advancements in neural networks allow end-to-end approaches that learn these processes collectively. The recent large language models (LLMs) have especially improved their accuracy significantly. Multimodal dialogue systems are also effective for engaging, context-sensitive interactions. A camera input can provide assessment of overall situations, and verbal or non-verbal responses with gestures and emotions make the dialogue more intuitive.

Building on our extensive experience in practical machine dialogue systems, we collaborate with companies and research projects to conduct comprehensive research on spoken dialogue systems. We are developing next-generation dialogue systems centered on the multimodal dialogue platform Remdis, leveraging LLMs.

- In-car voice information guidance systems based on LLMs (in collaboration with an automotive company)

- AI simulated patients and automated medical interview evaluation (in collaboration with Fujita Health University)

- Remote communication support using information-extracting agents

- Spoken dialogue systems as media content

Recent publications

- Yu Kaneko, Sei Ueno, Akinobu Lee "Motivational interview system emphasizing awareness of ambivalence" NLP2026, P5-20

- Takumi Yoshida, Sei Ueno, Akinobu Lee "Immersive voice dialogue system using virtual simulated patients for medical interview education" HAI Symposium 2025, P1-8

Natural Language Processing

Natural language processing (NLP) is a core technology for dialogue systems that determines how to manage conversation in the text domain. Recently, NLP has progressed dramatically through huge neural networks and large language models. Our lab focuses on the field of NLP with particular emphasis on “human-to-human dialogues.”

- Online motivational interview systems

- Dialogues aimed at clarifying and verbalizing user thoughts

- Narrative processing: extracting character relationships, generating summaries

Recent publications

- Kaho Suzuki, Sei Ueno, Akinobu Lee "Introspection support through LLM dialogue system based on divergent and deep-dive dialogue strategies" NLP2026, P5-17

- Yukito Minari, Sei Ueno, Akinobu Lee "Realization of TRPG game master system by LLM-based multi-agent" NLP2025, P10-17

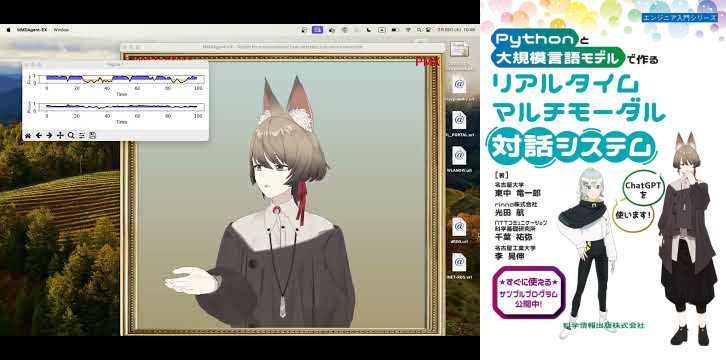

CG Agent Interaction

When having conversations with machines, humans always prefer human-like appearances since it is a natural way. Our lab focuses on dialogue systems using CG characters with full-body representations capable of physical communication (aka ECA: Embodied Conversational Agent).

While conversation technologies have emerged to give users more chances to speak to machines, most people still feel strange talking to a machine, and conversations never feel truly “human-like.” Our lab has a fully integrated original CG-agent system that combines speech recognition, synthesis, and dialogue technologies with a CG agent rendering engine. We conduct wide-ranging research from autonomous CG agents serving as AI front-ends to CG avatars operated by humans.

- Physical communication support using CG avatars

- Automated generation of behavior and body movements

- Design theory of CG agents

- Design, creation, and publication of CG agents

In particular, we aim to eliminate discomfort in dialogues with CG agents, enabling effortless, human-like conversations.

- Perception of “being an appropriate conversation partner” (dialogue-perception)

- CG-specific conversational styles

- Affordances for dialogue

- Spatial immersion using self-projection avatars

- Virtual social touch

Recent publications

- Hibiki Inaba, Sei Ueno, Akinobu Lee "Integrating CG-like and human-like behavioral expressions in voice dialogue agents" HAI Symposium 2026, P1-22

- Koki Yamada, Sei Ueno, Akinobu Lee "Effects of pseudo social touch in dialogue systems using CG agents" HAI Symposium 2025, P2-45

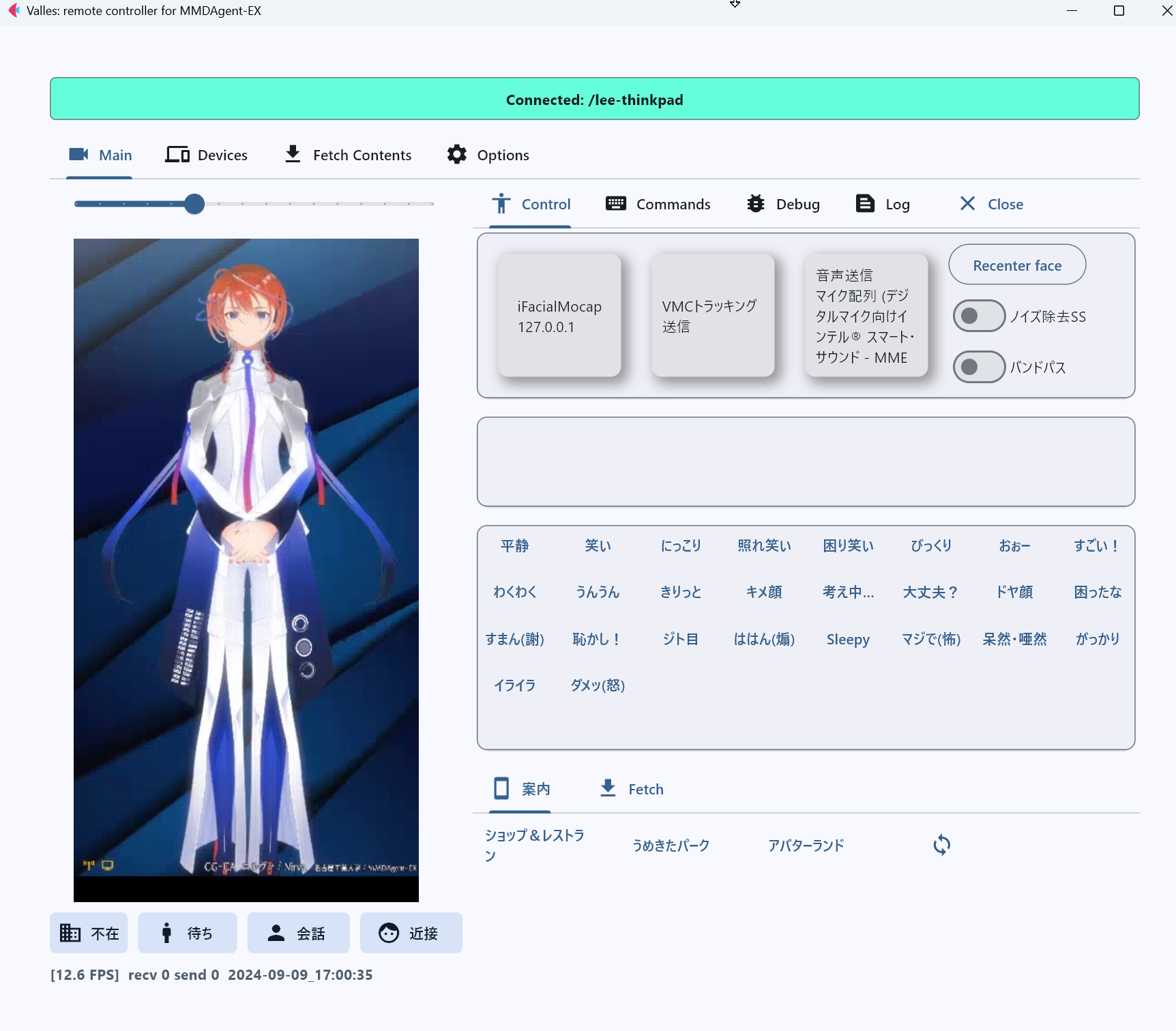

Avatar Communication Support

Research on “avatars,” where humans remotely operate robots or CG characters to make conversations and activities, has been ongoing in various fields. Recently, it has become more prominent in online culture, as seen with VTubers who use CG avatars to communicate. Enabling virtualized conversations with CG avatars is promising as it can serve to relax people’s time and place constraints, protect privacy, and support social participation for people with mobility issues.

Despite its potential, conversations using CG avatars still face challenges and limitations, preventing widespread adoption. Our lab leverages its long-time expertise in CG agent dialogue systems for human-machine dialogue to study CG avatar communication support.

Under the Moonshot Research and Development Program for realizing an avatar-symbiotic society project, we aim to create and socially implement an avatar system that anyone can use, anytime, anywhere.

- Research and development of an integrated CG avatar operation system (avatar1000 system)

- Defining conversational styles and requirements mediated by CG characters

- Real-time behavior generation from speech: Speech2Motion

- Collection of avatar operation corpora

Recent publications

- Soma Suzuki, Sei Ueno, Akinobu Lee "Real-time conversational motion generation from speech for avatar communication support" HAI Symposium 2025, P1-15